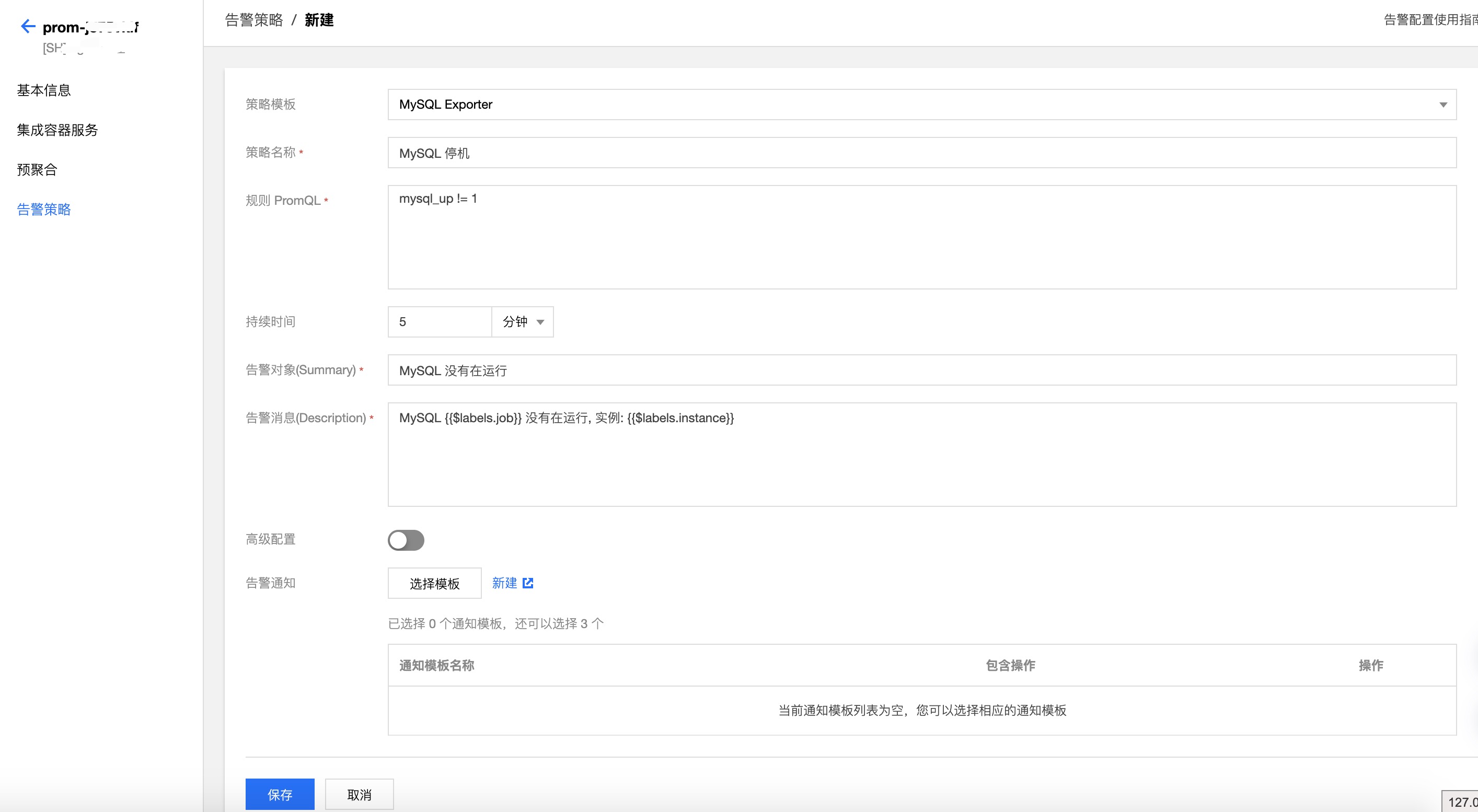

Using Prometheus Operator to Scrape the VMware MySQL Operator Metrics

Prometheus Operator allows you to define and manage monitoring instances as Kubernetes resources. This section provides an example of installing the Prometheus Operator using Helm, and an example Prometheus PodMonitor CRD that is used to scrape the MySQL metrics.

Use Helm to install the Prometheus Operator, which defines the custom resource definitions (CRDs) such as PodMonitor. The PodMonitor CRD defines the configuration details for the MySQL pod monitoring: `helm install prometheus-community/kube-prometheus-stack \ --set grafana.enabled=false,alertmanager.enabled=false \ --set prometheus-node-exporter.hostRootFsMount=false \`

Confirm that the PodMonitor CRD exists using `kubectl get`.

Create a PodMonitor that scrapes all MySQL instances every 10 seconds and skips verifying TLS, and apply it with `cat <<EOF | kubectl apply -f -`. During the VMware MySQL initialization, the VMware MySQL Operator creates a metrics-related self-signed certificate. For details on the PodMonitor API, refer to PodMonitor in the Prometheus Operator documentation.

To check if Prometheus is successfully monitoring the instances, open the Prometheus UI in the browser and visit the /targets URI to check the status of the PodMonitor under podMonitor/prometheus/vmware-mysql-instances/0 (1/1 up).
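The PodMonitor described in this section (scrape every 10 seconds, skip TLS verification) might look roughly like the sketch below. The name `vmware-mysql-instances` and namespace `prometheus` are taken from the target path shown in the Prometheus UI. The label selector, the port name `mysql-metrics`, and the helper name `podmonitor_manifest` are assumptions made for illustration, so check the labels and port names on your own MySQL pods before using it.

```shell
#!/usr/bin/env bash
# Print a PodMonitor manifest that scrapes MySQL pods every 10 seconds over
# HTTPS without verifying the exporter's self-signed certificate.
# ASSUMPTIONS (not from the source): the pod label selector and the metrics
# port name below are placeholders; adjust them to match your MySQL pods.
podmonitor_manifest() {
  cat <<'EOF'
apiVersion: monitoring.coreos.com/v1
kind: PodMonitor
metadata:
  name: vmware-mysql-instances
  namespace: prometheus
spec:
  namespaceSelector:
    any: true                        # match pods in every namespace
  selector:
    matchLabels:
      app.kubernetes.io/name: mysql  # assumed label
  podMetricsEndpoints:
    - port: mysql-metrics            # assumed name of the port exposing 9104
      scheme: https
      interval: 10s
      tlsConfig:
        insecureSkipVerify: true
EOF
}
```

The function only prints the manifest, so it can be reviewed first and then applied with `podmonitor_manifest | kubectl apply -f -`. Depending on how the chart was installed, Prometheus may only select PodMonitors that carry the Helm release label, so the target may not appear until that selector is relaxed or the label is added.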

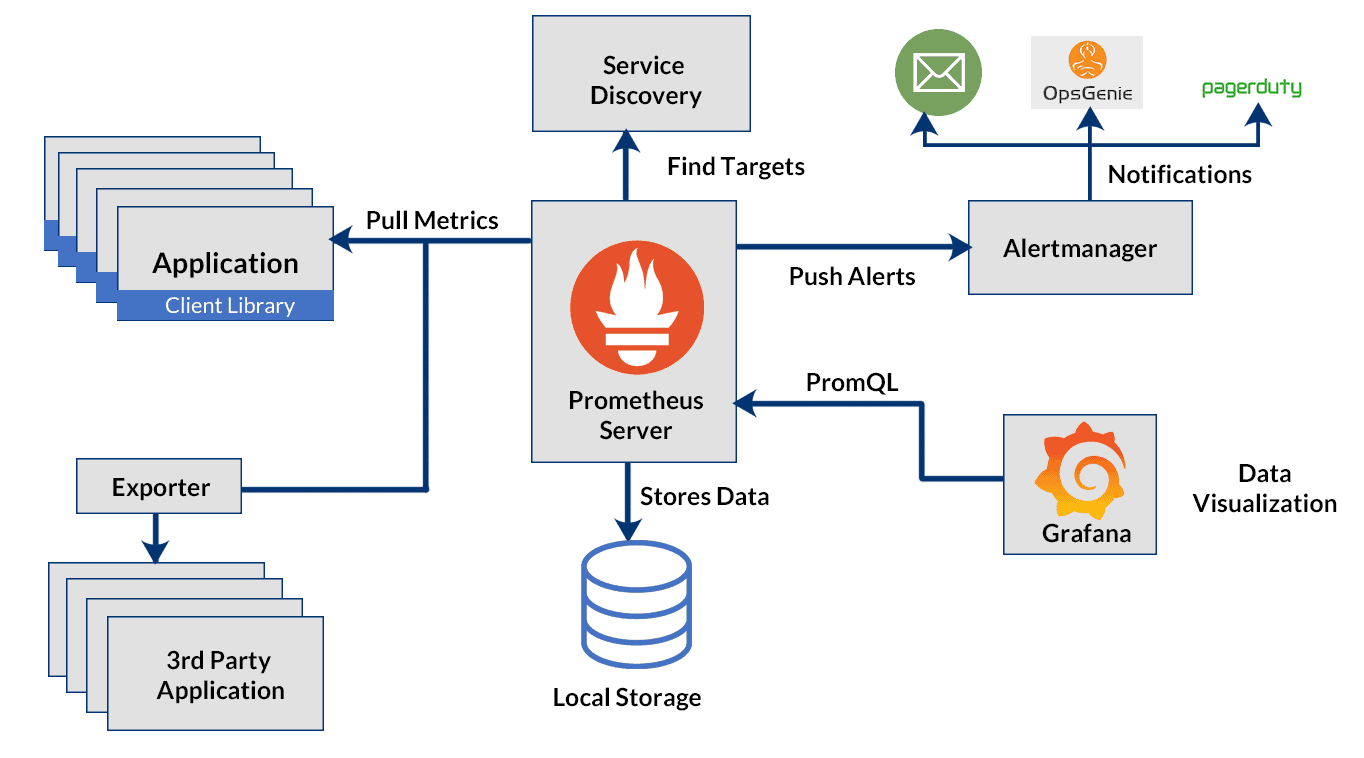

The architecture diagram shows a single-node MySQL instance with the MySQL server exporter, where the metrics are exported on port 9104. Prometheus could be your primary consumer of the metrics, but any monitoring tool can take advantage of the /metrics endpoint.

To take advantage of the metrics endpoint, ensure you have a metrics collector in your environment, such as Prometheus or Wavefront. For an example installation of Prometheus, see Using Prometheus Operator to Scrape the VMware MySQL Operator Metrics.

MySQL pods include the exporter that emits metrics by default. For a list of the exposed VMware MySQL metrics collectors, see MySQL Server Exporter Collector Flag Reference.

To test that the metrics are being emitted, you may use port forwarding (for more details see Use Port Forwarding to Access Applications in a Cluster in the Kubernetes documentation): `kubectl port-forward pod/ 9104:9104`. Then, in another shell, use a tool like curl to run `curl -k` against the /metrics endpoint. The output shows the metrics collection, for example: `mysql_global_status_open_tables 165`.
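When checking the exporter by hand, it can help to pull a single metric out of the full output. The following is a minimal sketch: `metric_value` is a hypothetical helper name, and the URL in the usage comment is an assumption based on the port forward and the /metrics endpoint described above.

```shell
#!/usr/bin/env bash
# metric_value NAME: read Prometheus text-format metrics on stdin and print
# the value of every unlabeled sample whose metric name is exactly NAME.
# Comment lines (# HELP, # TYPE) never match, because their first field is "#".
metric_value() {
  awk -v name="$1" '$1 == name { print $2 }'
}

# Example (assumes the port forward is active; the URL is an assumption):
#   curl -ks https://localhost:9104/metrics | metric_value mysql_global_status_open_tables
```

Matching on the exact first field keeps similarly named metrics apart, which a plain `grep` on the metric name would not do.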

More information about why we don't use a subordinate charm:

1. Charms are supposed to be devops distilled, meaning they encompass best-practice operations in the charms themselves. That way, when you deploy mysql you get not only mysql but also a solid way to monitor that mysql cluster, operational semantics on the alerts that are generally important, and actions that simplify operations (like backup, etc.).
1.1 Once you start pulling the monitoring pieces out of the charm, you start to lose the operations bits.
2. As Billy mentioned, the charm should include the monitoring logic itself.
2.1 The mysql prometheus exporter charm is not generic; we won't relate it to another charm.
2.2 The exporter will live with the principal charm. The objective is to be able to just juju deploy mysql and then relate mysql and the prometheus charm itself.
3. There may be a version management issue when the exporter lives outside of the principal charm.
3.1 If we shipped the principal charm without the exporter compatibility fixed, operators couldn't upgrade to the newer principal or they'd lose the monitoring. Putting them together in the same project lets us cover this with testing.
4. It may bring more complexity when we have to deal with two different things at the same time (without a subordinate), but this also happens in the subordinate pattern.

This topic describes how to collect metrics and monitor VMware SQL with MySQL for Kubernetes instances in a Kubernetes cluster.

VMware MySQL Operator uses the MySQL Server Exporter, a Prometheus exporter for MySQL server metrics. The Prometheus exporter provides an endpoint for Prometheus to scrape metrics from different application services. The MySQL Server Exporter shares metrics about the MySQL instances. Upon initialization, each MySQL pod adds a MySQL server exporter container. Prometheus sends HTTPS requests to the exporter. The exporter queries the MySQL database and provides metrics in the Prometheus format on a /metrics HTTPS endpoint (port 9104) on the pod, conforming to the Prometheus HTTP API.
